Over the past few evenings, I’ve been playing with SQLIO to get an idea of how an SSD compares to a couple of servers (one quite old, one a bit newer) that I have access to.

SQLIO can be used to performance-test an I/O subsystem before deploying SQL Server onto it. Despite the name, it doesn’t actually do anything specific to SQL Server; it just generates I/O.

If you haven’t looked at SQLIO, I would highly recommend looking at these websites:

http://www.sqlskills.com/BLOGS/PAUL/post/Cool-free-tool-to-parse-and-analyze-SQLIO-results.aspx

http://tools.davidklee.net/sqlio/sqlio-analyzer.aspx

The SQLIO Analyser, created by David Klee, is amazing. It allows you to run the SQLIO package (a preconfigured one is available on the site) and submit the results. It then generates an Excel file that contains various metrics. It’s nice!

Running on my Laptop…

Having run the pre-built package on my laptop, I got the following metrics out of it. As you can see, it’s an SSD (Crucial M4 SSD), and pretty nippy.

The interesting thing here, and one of the key benefits of an SSD, is that the average latency stays low regardless of what you are doing. For these tests, I was getting:

| Avg. Metrics | Sequential Read | Random Read | Sequential Write | Random Write |
| --- | --- | --- | --- | --- |
| Latency (ms) | 19.28 | 18.38 | 23.21 | 51.51 |
| Avg IOPS | 3777 | 3493 | 2930 | 1340 |
| MB/s | 236.07 | 218.3 | 183 | 83.7 |
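The IOPS and MB/s rows are two views of the same thing: throughput is roughly IOPS multiplied by the I/O size. As a quick sanity check, here is a minimal Python sketch that rebuilds the MB/s row from the IOPS row. The 64 KB block size is my assumption, inferred from the numbers lining up rather than read from the package configuration, and `iops_to_mb_per_sec` is just a helper name made up for this example.

```python
# Assumption: 64 KB I/O size, inferred from the figures in the table,
# not taken from the SQLIO package configuration.
BLOCK_SIZE_KB = 64

# Average IOPS from the laptop SSD table.
laptop_iops = {
    "Sequential Read": 3777,
    "Random Read": 3493,
    "Sequential Write": 2930,
    "Random Write": 1340,
}


def iops_to_mb_per_sec(iops, block_size_kb=BLOCK_SIZE_KB):
    """Convert IOPS to MB/s, treating 1 MB as 1024 KB."""
    return iops * block_size_kb / 1024


for workload, iops in laptop_iops.items():
    print(f"{workload}: {iops_to_mb_per_sec(iops):.2f} MB/s")
```

If the computed values drift a long way from the reported MB/s, the block size assumption is probably wrong for that test.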

Running on an older server

Running this on an older server, connected to a much older (6-8+ years old) SAN, gave me the results below. There is a significant drop in performance: IOPS and throughput are all much lower, latency is higher, and there is a much wider spread across the load types, which is down to the spinning disks.

| Avg. Metrics | Sequential Read | Random Read | Sequential Write | Random Write |
| --- | --- | --- | --- | --- |
| Latency (ms) | 24.81 | 66.79 | 373 | 260 |
| Avg IOPS | 1928 | 710 | 186 | 210 |
| MB/s | 120 | 44.3 | 11.6 | 13.14 |

Slightly newer Server

Next, I ran the SQLIO package on a slightly newer server (with a higher-spec I/O system, I was told), which gave the following results.

As expected, this gave generally better results, although it is interesting that Sequential Read had better throughput on the older server.

| Avg. Metrics | Sequential Read | Random Read | Sequential Write | Random Write |
| --- | --- | --- | --- | --- |
| Latency (ms) | 35.13 | 44.17 | 41.81 | 77.44 |
| Avg IOPS | 1474 | 1021 | 1314 | 794 |
| MB/s | 92.7 | 63.8 | 82.8 | 49.6 |

Cracking open VMware

Since I use VMware Workstation for compartmentalising projects on my laptop, I thought I’d run this against a VM. The VM was running on the SSD (at the top of the post), so I could see how much of an impact the VMware drivers had on the process. This gave some interesting results, which you can see below. Obviously there is something screwy going on here: it’s not likely that the VM can perform that much faster than the drive it’s sitting on. It would be nice if it could, though…

| Avg. Metrics | Sequential Read | Random Read | Sequential Write | Random Write |
| --- | --- | --- | --- | --- |
| Latency (ms) | 7.8 | 7.5 | 7.63 | 7.71 |
| Avg IOPS | 12435 | 13119 | 15481 | 14965 |
| MB/s | 777 | 819 | 967 | 935 |

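While the whole process was running, Task Manager on the host machine was showing around 0-2% disk utilisation, but the CPU was sitting at 50-60%. So it was hardly touching the disk, which presumably means most of the I/O was being served from a cache in memory rather than from the SSD itself.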
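A cheap way to catch this kind of thing is to compare the guest's numbers with those of the drive underneath it and flag anything that comes out faster. The sketch below does that with the MB/s figures from the two tables in this post; `suspicious_results` is a hypothetical helper written for this example, not part of SQLIO or the SQLIO Analyser.

```python
# MB/s figures copied from the laptop SSD and VM tables in this post.
host_ssd_mbps = {
    "Sequential Read": 236.07,
    "Random Read": 218.3,
    "Sequential Write": 183,
    "Random Write": 83.7,
}
vm_mbps = {
    "Sequential Read": 777,
    "Random Read": 819,
    "Sequential Write": 967,
    "Random Write": 935,
}


def suspicious_results(guest, host):
    """Return guest/host ratios for workloads where the guest beats its host drive."""
    return {w: guest[w] / host[w] for w in guest if guest[w] > host[w]}


flagged = suspicious_results(vm_mbps, host_ssd_mbps)
for workload, ratio in flagged.items():
    print(f"{workload}: VM reports {ratio:.1f}x the host SSD, treat with suspicion")
```

Every workload gets flagged here, which fits with the disk barely being touched during the run.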

Conclusion

Just to summarise, in case you didn’t already know: SSDs are really quick. For the testing I was doing, the SSD was giving me approx. double the performance of some pretty expensive hardware (or at least it was expensive 5-10 years ago…).
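To put a rough number on "approx. double", here is a small Python sketch that works out the SSD's throughput relative to each server, per workload, using the MB/s rows from the tables in this post. The `speedup` helper and the dictionary labels are names invented for this example.

```python
# MB/s figures copied from the tables in this post.
mb_per_sec = {
    "SSD (laptop)": {
        "Sequential Read": 236.07,
        "Random Read": 218.3,
        "Sequential Write": 183,
        "Random Write": 83.7,
    },
    "Older server": {
        "Sequential Read": 120,
        "Random Read": 44.3,
        "Sequential Write": 11.6,
        "Random Write": 13.14,
    },
    "Newer server": {
        "Sequential Read": 92.7,
        "Random Read": 63.8,
        "Sequential Write": 82.8,
        "Random Write": 49.6,
    },
}


def speedup(baseline, candidate):
    """Return the candidate/baseline throughput ratio for each workload."""
    return {w: candidate[w] / baseline[w] for w in baseline}


ssd = mb_per_sec["SSD (laptop)"]
for name in ("Older server", "Newer server"):
    ratios = speedup(mb_per_sec[name], ssd)
    summary = ", ".join(f"{w}: {r:.1f}x" for w, r in ratios.items())
    print(f"SSD vs {name} -> {summary}")
```

The ratio varies a lot by workload, so "double" is very much a ballpark figure; sequential read against the older server is the case that works out at roughly 2x.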

Also, take your test results with a grain of salt.

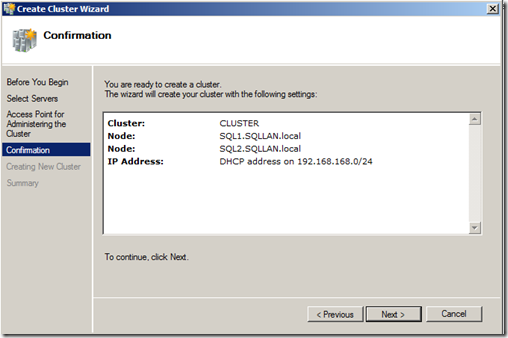

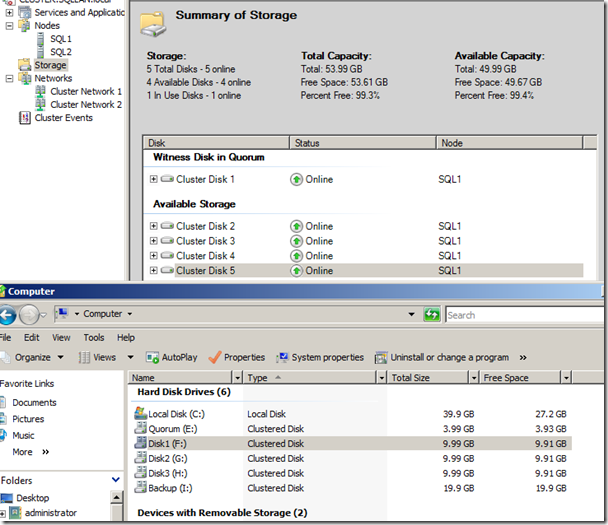

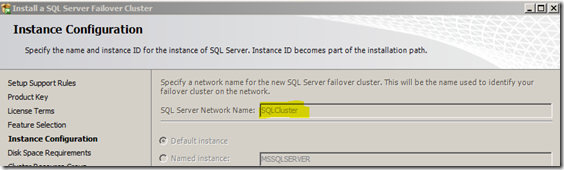

